AI Can Take Over the Code. It Can’t Take Over the Risk. Yet.

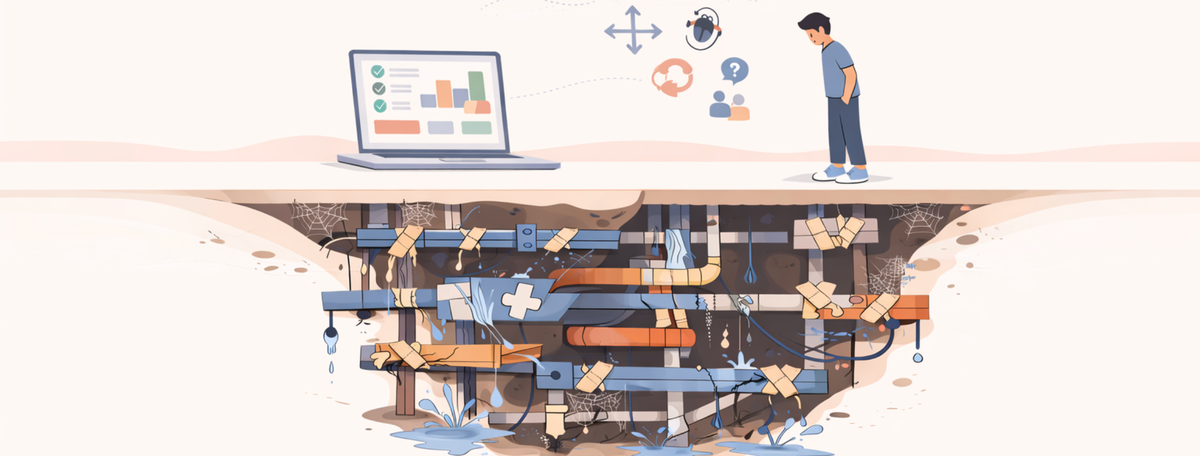

AI is reducing implementation cost, but the expensive part of software was always the risk carried by poor structure, poor assumptions, and poor handoffs.

A vibe-coded tool can look finished long before a system is trustworthy. The hard part is not getting something to work once. The hard part is knowing it will keep working when customers, teammates, bad inputs, and changing requirements hit it from different angles.

Then the questions start:

It works for the normal case. What happens when a customer does something we didn’t expect?

We need to add a feature, but it contradicts how the rest of the system was built. How do we insert it without breaking what already works?

The AI built it. The AI can’t figure out why it broke. Now what?

The person who set this up is leaving. Can anyone else pick it up?

We asked the AI to fix a bug and it introduced three more. How do we get out of this loop?

We think this is 90% done. How do we know it’s not actually 10%?

That is the real handoff point. Not when the prototype works. When the business has to depend on it.

Most business leaders turned vibe coders do not know what they do not know. That is why so many prototypes feel impressive right up until they become operational. AI demos are deceptively good, and the gap between “this looks finished” and “this is safe to run” stays invisible until real usage starts.

This is not an anti-AI argument

AI-assisted development is real. The gains are real. Teams are building faster with Cursor, Claude, Lovable, and Copilot. Founders and ops leads are right to experiment. They should be doing it.

The problem is not that AI can’t produce output. It can. The problem is that implementation is only one layer of a working system.

A founder can prompt their way to a dashboard, an internal tool, or an MVP in a fraction of the time it used to take. That changes the economics of building. It does not remove the need for someone who understands what should be built, how the business process should be translated, and where the risk will show up later.

Because once the first version exists, the question stops being “can we build this?” It becomes “can we trust this?”

Vibe coding is no-code’s sequel. Same plot.

We have seen this before. The tool is different. The failure mode is not.

Airtable, Zapier, and Notion made building accessible to non-engineers. Ops leads built CRMs. Marketing teams built workflow engines. Founders stitched together internal systems without writing code.

A lot of those systems worked. Until they had to scale. Until the original builder left. Until a key integration failed silently. Until the business needed auditability, permissions, error handling, or a clean change process.

A client came to us with a 40-step Zapier workflow and asked us to document it so they could maintain it. That was not the real job. It needed to be rebuilt as five separate automations. Another client had a Make.com workflow handling six branches in one scenario. Fine until branch seven appeared. Then the whole thing had to be restructured.

The issue was never access to tools. The issue was whether the result could survive real use. We wrote about this in Build Automation Systems That Don’t Break at 2 AM.

Vibe coding replays that pattern at higher speed and higher stakes. The outputs are more impressive now. The gap is still the same. Maybe wider.

The translation layer hasn’t changed

Here is what most vibe coding content gets wrong: the bottleneck was never typing code. The bottleneck was knowing what to tell the tool to do.

When a founder prompts an AI to “build me a client portal,” the AI does not ask:

- How should contacts relate to companies? One-to-many? Many-to-many?

- What happens when two users edit the same record?

- Which fields should be visible to clients versus internal staff?

- How do you handle a client with two active contracts and different billing terms on each?

- Where does this data go if you outgrow the tool in 18 months?

The AI will produce something. It will look right. It will feel like progress. But every unanswered question becomes a structural flaw that shows up later as bad data, broken workflows, brittle permissions, or a system nobody trusts enough to extend.
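To make a few of those questions concrete, here is a minimal Python sketch of what answering them up front might look like. Everything in it is a hypothetical illustration, not the output of any particular tool: the `Membership` join record assumes contacts and companies are many-to-many, `save_email` assumes a simple version counter to catch two users editing the same record, and `VISIBLE_FIELDS` assumes an explicit allow-list per audience.

```python
from dataclasses import dataclass


@dataclass
class Company:
    id: int
    name: str


@dataclass
class Contact:
    id: int
    email: str
    version: int = 1  # bumped on every successful save


@dataclass
class Membership:
    """Join record: one row per (contact, company) pair, so the
    relationship is many-to-many instead of baked into Contact."""
    contact_id: int
    company_id: int
    role: str


class StaleWriteError(Exception):
    """Raised when a save is based on an outdated copy of the record."""


def save_email(contact: Contact, new_email: str, expected_version: int) -> None:
    # Optimistic concurrency (an assumed policy): reject the write
    # if someone else saved after this editor loaded the record.
    if contact.version != expected_version:
        raise StaleWriteError(
            f"contact {contact.id} is at v{contact.version}, "
            f"edit was based on v{expected_version}"
        )
    contact.email = new_email
    contact.version += 1


# Hypothetical allow-list: a field not listed for an audience stays hidden.
VISIBLE_FIELDS = {
    "client": {"id", "email"},
    "staff": {"id", "email", "version"},
}


def view_for(contact: Contact, audience: str) -> dict:
    allowed = VISIBLE_FIELDS[audience]
    return {k: v for k, v in vars(contact).items() if k in allowed}
```

None of these choices is the only reasonable one. The point is that each is a decision someone has to make on purpose, because the prompt alone does not make it.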

Someone using Claude Code or Cursor will write code faster than someone who isn’t. That is real. But someone who does not know how to code will still produce a weaker result than someone who does, regardless of how good the AI assistant is. The tool accelerates the person. It does not replace what the person knows.

This is the translation layer — the gap between what a business needs and what a tool produces. Prompting Cursor and configuring Airtable are different skills. But the translation problem is the same: someone has to understand the business process well enough to model it correctly.

We see the same mistake in no-code buying decisions too. Teams look for a tool before they have mapped the process. That is why pieces like Stop Looking for a CRM: Here’s where to start matter before the build starts.

The tool changed. The gap didn’t.

AI can take over the code. It can’t take over the risk.

Where teams actually get stuck

The teams we talk to are usually not blocked at the start. They are blocked after progress.

They have built something useful. Maybe a search layer. Maybe an internal admin tool. Maybe a client portal. Maybe a process wrapped in prompts and automation. It does enough to prove the idea.

Then confidence drops.

Not because the team suddenly doubts AI. Because the next problems are specific:

- The portal works for 10 clients. Client #11 has two contacts at the same company and the system creates duplicate records.

- An ops lead adds a new field. Three automations break silently because they depended on a value that no longer exists.

- Someone asks, “Where does this data go if a deal falls through?” Nobody knows.

- A teammate tries to modify a vibe-coded feature. The AI suggests a fix that contradicts the existing data model. Now there are two conflicting patterns in the same system.
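The first two failures in that list are cheap to guard against once someone decides to. The Python sketch below is a hypothetical illustration with invented names (`company_key`, `add_contact`, `require_fields`). It assumes companies are matched on a normalized name and that each automation declares the fields it depends on, so a missing field fails loudly instead of silently.

```python
def company_key(name: str) -> str:
    """Normalize a company name so 'Acme Inc.' and ' acme inc ' collide."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(cleaned.split())


def upsert_company(companies: dict, name: str) -> dict:
    """Return the existing company for this name, or create it once."""
    key = company_key(name)
    if key not in companies:
        companies[key] = {"name": name.strip(), "contacts": []}
    return companies[key]


def add_contact(companies: dict, company_name: str, email: str) -> None:
    # A second contact at the same company attaches to the existing
    # record instead of creating a duplicate company.
    company = upsert_company(companies, company_name)
    email = email.strip().lower()
    if email not in company["contacts"]:
        company["contacts"].append(email)


class MissingFieldError(Exception):
    """An automation was handed a record without a field it depends on."""


def require_fields(record: dict, required: set, automation: str) -> None:
    # Fail loudly: name the automation and the missing fields.
    missing = sorted(required - set(record))
    if missing:
        raise MissingFieldError(f"{automation}: missing fields {missing}")
```

Matching on a normalized name is itself an assumption. A real system might key on a domain or a tax ID. What matters is that the matching rule is written down and the dependency check runs before the automation does.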

At that point, the team is not asking for more code. They are asking for someone to look at what exists and tell them what is solid, what is fragile, and what has to change before the system becomes something the business can rely on.

That is the point of expert help. Not more output. Less risk.

Code is cheap. Consequences are not.

Most conversations about AI replacing developers focus on code generation. That is too narrow.

Writing code is one part of software delivery. Often not the hardest part. The harder part is everything around the code: what the system should do, how data moves, what happens when assumptions fail, how access is controlled, how failures are detected, how changes are made without damage, and how someone else takes over later.

Those are not coding questions. They are risk questions.

A system is not reliable because it exists. It is reliable because someone has made deliberate decisions about structure, constraints, ownership, monitoring, and recovery. That is the difference between a demo and production. One proves something can work. The other proves the business can live with it.

We wrote about this in Before You Add AI, Build the System. Most businesses do not fail at AI because they misunderstand AI. They fail because they mistake AI for the system itself. The same gap shows up in pricing, when buyers compare implementation-heavy proposals with work that is really about failure prevention, architecture, and risk allocation.

Speed does not make a system safer

AI lowers the cost of production. It does not lower the cost of being wrong.

The faster you can build, the faster you can create hidden debt. A rushed fix can distort the architecture. A generated patch can solve the visible bug and introduce three invisible ones. A feature can be added in a way that works today but blocks every sensible change after it.

The loop becomes familiar:

prompt → patch → test → break → repeat

The issue is not that AI is sloppy by definition. The issue is that teams confuse generated output with finished thinking.
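One possible way to slow that loop down is to pin the behavior the business already relies on before accepting any generated patch. The Python sketch below is a hypothetical example. `monthly_total`, the contract fields, and the pinned cases are all invented for illustration, and the amounts are in cents by assumption.

```python
def monthly_total(contracts: list) -> int:
    """Sum the monthly amount, in cents, of a client's active contracts."""
    return sum(c["monthly_cents"] for c in contracts if c["active"])


# Each case pins behavior that already works and must keep working.
PINNED_CASES = [
    ("no contracts", [], 0),
    ("one active", [{"monthly_cents": 5000, "active": True}], 5000),
    (
        "two active, different terms",
        [
            {"monthly_cents": 5000, "active": True},
            {"monthly_cents": 12000, "active": True},
        ],
        17000,
    ),
    (
        "inactive ignored",
        [
            {"monthly_cents": 5000, "active": True},
            {"monthly_cents": 9900, "active": False},
        ],
        5000,
    ),
]


def failed_cases(fn) -> list:
    """Return the names of pinned cases that fn no longer satisfies."""
    return [
        name
        for name, contracts, expected in PINNED_CASES
        if fn(contracts) != expected
    ]
```

Running `failed_cases` against a patched function before merging shows which existing behavior the patch changed. An empty list does not prove the patch is right. It only shows that nothing pinned has broken.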

AI made demos cheap. It did not make production systems cheap. It made it easier to reach the risk layer faster.

If you are a good coder, AI will amplify your skills.

If you’re prompting without thinking, AI will build without thinking too.

And even in newer tools, that same pattern shows up again. Zite Is Fast. The Last 10% Is Still the Hard Part makes the same point from the app layer: getting to something that works is faster now. Turning it into something dependable is still the real work.

What companies are actually paying for

When teams hire a technical partner, they often assume they are paying for implementation.

They are not. Not mainly.

They are paying for decisions under uncertainty. For architecture that survives change. For failure planning before failure happens. For someone who can tell the difference between a local fix and a structural problem. For accountability when the system matters and something breaks. And for knowing, through experience, where to hit it with the hammer.

One brokerage described its software search this way: “I did like 57 different demos, right? Like every single tool or system that’s available and none of them work.” The issue was not finding software. The issue was finding something flexible enough to support how the brokerage actually operated.

When implementation gets cheaper, the remaining value becomes easier to see. The value is not in typing code. It is in knowing where the weak points are before they turn into outages, rework, or bad data. That’s why McKinsey, SAP, and the terrible Workday are still in business.

Software buyers are not paying for code. They are paying for someone to absorb complexity and make the system safer to depend on.

What most growing companies actually need

Most businesses do not need a full engineering department on day one. They do not need a full-time CTO before the work justifies it.

They need experienced technical judgment in the gaps AI cannot close.

They need someone who can assess what exists, make architectural decisions, reduce fragility, and turn a promising prototype into an operable system. Someone who can step in between founder ambition and production reality and make both legible.

The model that makes sense for most teams is simple:

Use AI to move fast. Use experienced judgment to keep that speed from turning into damage.

That is not a premium add-on. Once the system matters, it is baseline.

The bottom line

AI is making implementation faster, cheaper, and more accessible. It is not making reliability automatic.

The companies that benefit most will not be the ones that generate the most output. They will be the ones that pair speed with structure, and experimentation with accountability. They will use AI where it helps, then bring in judgment where the risk starts.

Not in getting something to run. In knowing it will keep running.

And when the questions at the top of this piece start sounding familiar, that is usually the moment to stop prompting and start asking whether the system can be trusted.

We have done this across 200+ projects and 100+ businesses over six years. 70% come back for more work. Not because we lock anyone in – we hand over everything – but because the judgment is what they needed in the first place.

The Blueprint is where it starts. One week. We map what you have built, what is solid, what is fragile, and what has to change before the business depends on it.