Before You Add AI, Build the System.

Most businesses do not fail at AI because they misunderstand AI. They fail because they mistake AI for the system itself. Structured data, automations, and interface design still come first.

Every other week, someone asks us to “add AI” to their business.

Once we dig into what they mean, the request usually comes down to one idea: make it so I do not have to think about this anymore.

That is not an AI problem. It is a systems design problem. And the confusion between the two is where the money gets wasted.

We have built enough systems (operational apps, hiring tools, portals, automation pipelines) to know that AI is genuinely useful. But it is useful in a specific way, at a specific layer, doing a specific job. It is not the foundation. It is not the database. It is not the automation engine. It is not the UI.

It is a layer. And most business owners, through no fault of their own, do not know where that layer sits.

The confidence problem is not stupidity. It is marketing.

AI demos are deceptively good.

You type a prompt, get an impressive answer, and the instinct kicks in: if it can do this, it can probably run that. The jump from “AI summarized my meeting notes” to “AI should run my operations” feels natural.

It is also wrong.

We recently had a call with a client building a distribution business. She had spent the weekend researching AI agents and came in energized. She wanted agents for pricing, compliance, market research, logistics, and lead follow-up. In her words: “I really think that each one of the tasks can pretty much be trained for the agent... I was geeking out over this over the weekend and just got really excited once I just started seeing kind of what these resources were.”

That is not a ridiculous reaction. It is the predictable outcome of spending a weekend chatting with Claude. The tools look like they can do everything. The gap between the demo and production is invisible unless you have built production systems before.

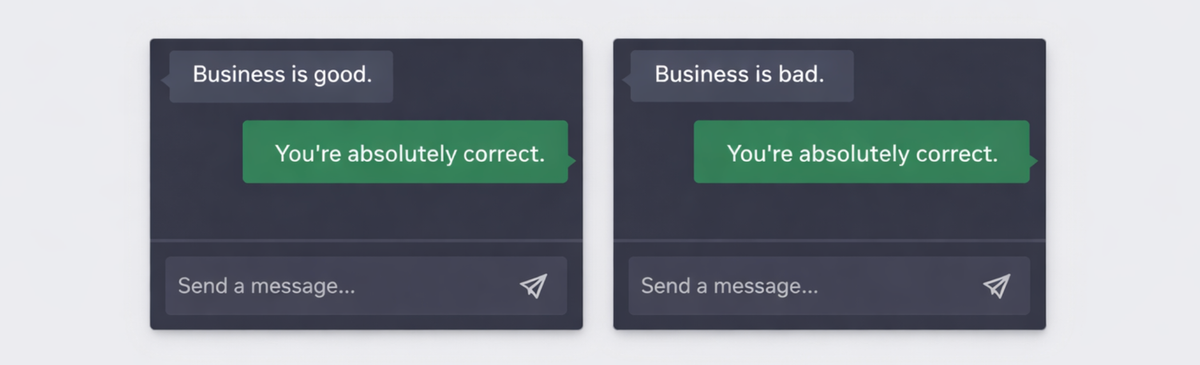

There is a second factor. Chat models are tuned in part on which responses users prefer, and users tend to prefer agreement. The result leans toward sycophancy.

The Dunning-Kruger effect shows up here in a specific and expensive way. Overconfidence introduces real risks: tech debt, data privacy leaks, brittle workflows, and AI-generated code with vulnerabilities. Organizations push forward thinking they are accelerating, while missed deadlines and cleanup costs accumulate underneath.

But the overconfidence is not random. It is manufactured.

Vendors, investors, and media outlets have helped build a narrative in which AI is presented as the answer to nearly every business problem. That narrative has driven overreach in both implementation and adoption. AI has real value in some settings. It has also been forced into products and workflows where it adds cost, complexity, and technical debt without solving the underlying problem.

Business owners are experts in their domain. That is not the issue. The issue is that AI marketing tells them they no longer need systems architecture.

That is false.

The numbers confirm it

Pause on the data for a moment, because the pattern is hard to ignore.

MIT’s NANDA initiative report, The GenAI Divide: State of AI in Business 2025, found that only a small share of AI pilots produced rapid revenue acceleration. Most stalled before delivering measurable impact on profit and loss.

S&P Global’s 2025 survey of more than 1,000 enterprises found that 42% of companies abandoned most of their AI initiatives that year, up from 17% in 2024. The average organization scrapped 46% of its AI proof-of-concepts before they reached production.

RAND Corporation’s analysis found that more than 80% of AI projects fail, roughly twice the failure rate of non-AI technology projects.

Why do they fail?

Most failed AI initiatives do not collapse because the models are useless. They fail because the surrounding system is weak. The technology is not tied to measurable business outcomes, not integrated into real workflows, or not supported by the people expected to use it.

McKinsey’s 2025 AI survey points to the same pattern: organizations reporting significant financial returns were much more likely to have redesigned end-to-end workflows before choosing modeling techniques. Generative AI has not removed an older rule of thumb from machine learning work: roughly 80% of the effort in an AI project is data preparation.

Read that again. Data preparation. Not model selection. Not prompt engineering. Not AI workflows.

The system underneath.

AI cannot hold your whole business. That is an architectural fact.

This is the part nobody wants to hear.

Today’s frontier models offer context windows in the range of hundreds of thousands to a few million tokens. That sounds enormous until you compare it to an actual business, whose production systems span years of accumulated records, permissions, and workflows. A large context window does not change that mismatch.

And even within that window, practical reliability can drop well before the advertised maximum. The degradation is not neat, either: retrieval gets inconsistent, and important details fall out of attention.

Chroma’s research on context rot makes the point directly: performance becomes less reliable as input length grows.

On that same client call, our team explained it this way: “Any model right now, off the shelf, can easily handle somewhere around 200,000 tokens... and if you fill that bucket with more than like 40%, it starts hallucinating things. It predicts things that an expert would not predict.”
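To put rough numbers on that quote, here is a minimal sketch of the arithmetic. The 200,000-token window and the 40% threshold are taken from the quote above. The four-characters-per-token ratio and the 600-character average record size are our own illustrative assumptions, not measurements from a real tokenizer or a real dataset.

```python
# Assumptions for illustration only: the window size and usable share come from
# the quote above; CHARS_PER_TOKEN is a crude heuristic, not a real tokenizer.
CONTEXT_WINDOW_TOKENS = 200_000
USABLE_SHARE = 0.40
CHARS_PER_TOKEN = 4


def usable_budget(window: int = CONTEXT_WINDOW_TOKENS, share: float = USABLE_SHARE) -> int:
    """Tokens that can be filled before reliability is expected to drop."""
    return round(window * share)


def estimate_tokens(text: str) -> int:
    """Crude token estimate: character count divided by CHARS_PER_TOKEN, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def records_that_fit(avg_record_chars: int, budget_tokens: int) -> int:
    """How many records of a given average size fit inside the budget."""
    tokens_per_record = max(1, -(-avg_record_chars // CHARS_PER_TOKEN))
    return budget_tokens // tokens_per_record


budget = usable_budget()
print(budget)                         # 80000
print(records_that_fit(600, budget))  # 533
```

Under these assumptions, the usable budget holds a few hundred modest records, which is a small fraction of what even a young business accumulates.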

Think about what that means for your business.

Your business is not a single prompt. It is years of decisions, thousands of records, dozens of relationships, permissions, historical context, edge cases, and branching workflows. No context window holds all of that at once. Not today. Not at 1 million tokens. Not at 10 million.

That is why you need a database. That is why you need structured data. That is why automations exist. That is why AI sits on top of the system rather than underneath it.

Here is the analogy we use with clients: AI is like a very smart consultant you hired for a single meeting. They will synthesize whatever you put in front of them. But they do not remember last week’s meeting. They do not have access to your filing cabinet. They do not know what happened last quarter unless you hand them the right file, in the right format, at the right time.

That “handing” is the real work.

And it is not AI work. It is systems work.
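As a rough illustration of what that "handing" can look like, the sketch below pulls only the relevant rows from a structured store and formats them as compact prompt context. The `orders` table, its columns, and the sample rows are hypothetical, and an in-memory SQLite database stands in for whatever database the business actually uses.

```python
import sqlite3


def build_context(conn: sqlite3.Connection, customer_id: int, limit: int = 5) -> str:
    """Fetch only the most recent orders for one customer and format them for a prompt."""
    rows = conn.execute(
        "SELECT order_date, item, status FROM orders "
        "WHERE customer_id = ? ORDER BY order_date DESC LIMIT ?",
        (customer_id, limit),
    ).fetchall()
    return "\n".join(f"{order_date} | {item} | {status}" for order_date, item, status in rows)


# Hypothetical schema and sample data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer_id INTEGER, order_date TEXT, item TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [
        (1, "2025-01-10", "Pallet jack", "delivered"),
        (1, "2025-03-02", "Shrink wrap", "delayed"),
        (2, "2025-02-14", "Labels", "delivered"),
    ],
)

context = build_context(conn, customer_id=1)
print(context)
```

The model only ever sees the two rows that matter for this customer. Deciding which rows matter, and keeping them queryable, is done by the database and the query, not by the model.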

There is also a cognitive cost nobody talks about

There is another dimension here that matters for business owners specifically.

An MIT Media Lab study explored the neural and behavioral consequences of LLM-assisted writing. Participants were divided into three groups: LLM, search engine, and brain-only. Each completed three sessions under the same condition.

The results were not subtle. Over time, participants in the LLM group underperformed on several neural, linguistic, and behavioral measures. The researchers used the term “cognitive debt” to describe the possibility that repeated reliance on AI systems may weaken some of the processes involved in independent thinking.

For a business owner making architectural decisions, that is a bad combination: a tool that increases confidence while also reducing some of the thinking needed to spot its limitations.

We do not bring this up to scare anyone. We bring it up because it explains a pattern we keep seeing. A business owner uses ChatGPT or Claude to draft requirements, evaluate tools, reason through architecture decisions, and compare vendors, then acts surprised when the final system falls apart under real usage.

The AI did not fail.

The thinking behind the decision was outsourced to the same tool being evaluated.

What a real business system actually needs

This is the part most AI content skips.

A working business does not run on AI. It runs on four layers, and AI is only one of them:

| Layer | What it does | Examples |
|---|---|---|
| Structured Data | Holds the truth: records, relationships, history, permissions | Airtable, Postgres, Notion databases |
| Automations | Moves data between systems reliably, with triggers, staging, and error handling | n8n, Make, Zapier, Airtable Automations |
| UI / App Layer | Where people interact with the system: forms, dashboards, portals, views | Zite, Softr, Bubble, custom React apps |
| AI Layer | Summarization, classification, triage, extraction, drafting, search | OpenAI, Claude, streaming AI workflows |

No organization is “fully AI.” Claims like that usually mean the speaker does not understand where the data lives.

We usually do not start with AI. We start with the business system. Once the data is structured, the automations are reliable, and the UI supports the real workflow, AI becomes much easier to add in sensible ways. Before that, it is just noise on top of a mess.

We wrote about this approach in “AI in SMBs Needs Three Things: Context, Context, and Context.” Most SMB AI work disappoints for a boring reason: the information behind it is fragmented. The model is not the problem. The system is.

The 80/15/5 rule

Here is a rough ratio we have seen across our projects:

About 80% of the work: structured data and automations.

This is the boring part. It is also where most of the operational value lives. Getting records right, linking entities correctly, building pipelines that handle edge cases, and designing for failure. Skip this, and nothing after it works.

We covered this in “Build Automation Systems That Don’t Break at 2 AM.” The difference between an automation and a system is whether you can describe what happens when it fails. If the answer is “I don’t know,” you have an automation. If the answer includes staging tables, retry logic, and error alerts, you have a system.
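Here is a minimal sketch of that difference, assuming a single record being pushed to another system. The `send` and `alert` callables are hypothetical stand-ins for a real API call and a real notification channel, and a plain list stands in for a staging table.

```python
import time
from typing import Callable, Optional


def run_with_retry(
    record: dict,
    send: Callable[[dict], None],
    alert: Callable[[str], None],
    staging: list,
    max_attempts: int = 3,
    base_delay: float = 0.0,
) -> bool:
    """Try to deliver one record; on repeated failure, stage it and raise an alert."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            send(record)
            return True
        except Exception as exc:
            last_error = exc
            # Exponential backoff: base_delay, then 2x, then 4x, and so on.
            time.sleep(base_delay * 2 ** (attempt - 1))
    staging.append(record)  # keep the record so it can be replayed later
    alert(f"Record {record.get('id')} failed after {max_attempts} attempts: {last_error}")
    return False


# Hypothetical senders used only to exercise the function.
calls = {"n": 0}


def flaky_send(record: dict) -> None:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary outage")


def always_fail(record: dict) -> None:
    raise ConnectionError("endpoint down")


staging: list = []
alerts: list = []
ok = run_with_retry({"id": 1}, flaky_send, alerts.append, staging)
failed = run_with_retry({"id": 2}, always_fail, alerts.append, staging)
```

In this sketch the first record goes through on the third attempt. The second is never lost: it lands in staging and produces one alert, so the answer to "what happens when it fails" is written down in the code.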

About 15%: the UI and app layer.

This is the interface people actually use. Some of it can now be scaffolded by AI tools. We wrote about that experience with Zite in “Zite Is Fast. The Last 10% Is Still the Hard Part.” The scaffolding is good. The last-mile engineering (sync behavior, offline tolerance, state management, performance) is still systems work.

About 5%: AI, strategically placed.

Summarization. Triage. Classification. Extraction. Content drafting. Search enhancement. Streaming responses in a user-facing assistant. These are controlled points where AI helps with something specific. Not “AI everywhere.” Not “let the model decide.” Specific, scoped, useful.

That ratio is not a formula. It shifts depending on the business. The direction does not.

The system comes first. AI comes last.

Where AI actually helps, and where it creates noise

AI is useful for:

- Summarizing meeting notes or long threads into action items

- Classifying incoming requests such as support triage, lead scoring, and intake routing

- Extracting structured data from unstructured sources such as invoices, emails, and resumes

- Drafting content for human review

- Searching internal knowledge bases, when the knowledge base itself is structured

- Powering workflow-specific assistants
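The classification item in the list above can be sketched as a scoped call whose output is checked before the rest of the system trusts it. The label set, the fallback value, and `fake_model` are hypothetical. In a real build, `model` would wrap whichever provider the business uses.

```python
from typing import Callable

ALLOWED_LABELS = {"billing", "shipping", "technical"}  # hypothetical categories
FALLBACK = "needs_human_review"


def classify_request(text: str, model: Callable[[str], str]) -> str:
    """Ask the model for a label; accept it only if it is one of the allowed values."""
    prompt = (
        "Classify the request into exactly one of: "
        + ", ".join(sorted(ALLOWED_LABELS))
        + ".\nRequest: " + text + "\nLabel:"
    )
    try:
        raw = model(prompt)
    except Exception:
        return FALLBACK  # a model outage should not break intake
    label = raw.strip().lower()
    return label if label in ALLOWED_LABELS else FALLBACK


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call, used only for this example."""
    return " Shipping\n" if "package" in prompt else "I think this could be many things"


print(classify_request("Where is my package?", fake_model))  # shipping
print(classify_request("Hello there", fake_model))           # needs_human_review
```

The model proposes a label and the system decides whether to accept it. Anything outside the allowed set is routed to a person instead of flowing silently into the records.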

AI creates noise when:

- It replaces a database as the source of truth

- It is asked to make operational decisions without enough business context

- It generates automations that nobody can debug

- It is the first thing built instead of the last

- It is layered on top of scattered, unstructured data and expected to “figure it out”

The distinction is not subtle.

One is AI as a tool.

The other is AI as a fantasy.

The real cost of getting it backwards

When you start with AI and bolt structure on later, the costs show up fast.

- You spend more fixing the foundation than you would have spent building it properly the first time

- The AI output sounds generic because the context feeding it is scattered

- You end up with tech debt disguised as innovation

- Proposal costs balloon; we wrote about this in “How to Price No-Code Proposals,” where the same project received proposals ranging from $20K to $300K

When you start with structure and add AI strategically, the economics look different.

- AI has something real to work with: clean records, clear relationships, reliable pipelines

- The system is debuggable, maintainable, and owned by the business

- The AI layer can be swapped, upgraded, or removed without rebuilding everything

- You can actually measure whether it helps
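The last two points in the list above can be sketched together: if the AI step sits behind a plain function signature, it can be swapped out, and if the records carry human-assigned labels, it can be scored. Everything here is hypothetical, including both classifiers and the four labeled examples. It shows the shape of the check, not a benchmark.

```python
from typing import Callable, Iterable, Tuple

Classifier = Callable[[str], str]


def accuracy(classifier: Classifier, labeled: Iterable[Tuple[str, str]]) -> float:
    """Share of human-labeled examples where the classifier agrees with the human label."""
    pairs = list(labeled)
    if not pairs:
        return 0.0
    hits = sum(1 for text, expected in pairs if classifier(text) == expected)
    return hits / len(pairs)


def keyword_baseline(text: str) -> str:
    """Non-AI baseline: a single keyword rule."""
    return "shipping" if "deliver" in text.lower() else "billing"


def candidate_model(text: str) -> str:
    """Stand-in for an AI-backed classifier with the same signature."""
    lowered = text.lower()
    return "shipping" if ("deliver" in lowered or "package" in lowered) else "billing"


# Hypothetical human-labeled records pulled from the structured data layer.
labeled = [
    ("My delivery is late", "shipping"),
    ("Package arrived damaged", "shipping"),
    ("I was charged twice", "billing"),
    ("Update my invoice address", "billing"),
]

print(accuracy(keyword_baseline, labeled))  # 0.75
print(accuracy(candidate_model, labeled))   # 1.0
```

Because both classifiers share one signature, replacing the AI layer is a one-line change, and the same labeled records show whether the replacement is worth keeping.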

This is not anti-AI

We like AI. We use it daily. We build AI workflows for clients.

But we have never seen a business problem solved by AI alone. The solution is always the same: structured data, reliable automations, a UI that works for the team, and then, strategically, some AI. And we do not see AI getting so good that it replaces the need for systems. That would be terrible for capitalism.

The businesses that will get real value from AI in 2026 and beyond are the ones that stop treating it as the whole answer and start treating it as a layer. A powerful one. A useful one. But still a layer.

Build the system first.

Then add the intelligence.